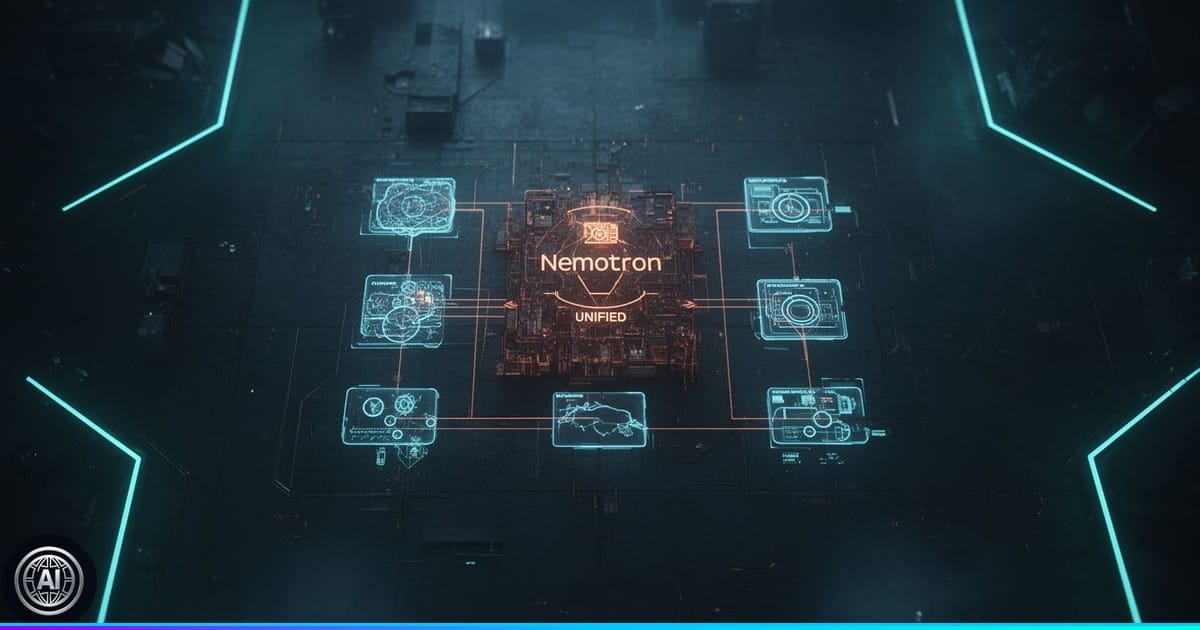

Unified Perception for Simpler Agent Design

The availability of NVIDIA Nemotron 3 Nano Omni on Amazon SageMaker JumpStart marks a shift toward unified multimodal AI. Earlier approaches stitched together a specialized model for each data type (video, audio, image, and text); this model accepts all four and generates text output. That consolidation streamlines the development of AI agents that must understand and react to complex, real-world scenarios. According to the technical reports, the model uses a Mamba2 Transformer Hybrid Mixture of Experts (MoE) architecture with 3 billion active parameters out of 30 billion total, and it supports a 131K-token context length for long-context reasoning.

Cloud-Centricity and Trade-offs

Deploying Nemotron 3 Nano Omni through SageMaker JumpStart delivers a unified multimodal experience, but it also centralizes processing in the cloud. Enterprises gain convenience and give up the on-device privacy and lower latency that decentralized or federated architectures could offer. For agentic applications, such as those navigating graphical user interfaces or managing document compliance workflows, the likely consequences are higher operational costs and a single point of failure for sensitive enterprise data.

📊 Key Numbers

- Total Parameters: 30 billion

- Active Parameters: 3 billion

- Context Length: 131K tokens

- Throughput: Up to 9x higher compared to alternative open omni models

- Supported Video Input: Up to 2 minutes, 256 frames (mp4)

- Supported Audio Input: Up to 1 hour, 8kHz+ sampling rate (wav, mp3)

- Supported Image Input: Standard resolution (JPEG, PNG (RGB))

- Inference Code: Python snippets available for image, text, video, and audio inference on Amazon SageMaker JumpStart.

- Thinking Mode Parameters: temperature 0.6, top_p 0.95, and max_tokens 20480, recommended for complex reasoning.

- Instruct Mode Parameters: temperature 0.2 and max_tokens 1024 with thinking disabled, recommended for faster responses on general tasks such as automatic speech recognition (ASR).

- Endpoint Management: Users are instructed to delete the SageMaker endpoint after use to avoid charges.
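The input limits listed above can be checked on the client before a file is sent to the endpoint. The following is a minimal sketch, not code from the original announcement: `MediaInfo` and `check_limits` are hypothetical names, file extensions stand in for the listed formats, and the 256-frame figure is treated as a hard cap even though it may instead describe a sampling budget. Whether the endpoint rejects, truncates, or resamples oversized inputs is not stated in the source.

```python
from dataclasses import dataclass
from typing import List, Optional

# Limits copied from the Key Numbers list.
MAX_VIDEO_SECONDS = 2 * 60
MAX_VIDEO_FRAMES = 256
MAX_AUDIO_SECONDS = 60 * 60
MIN_AUDIO_SAMPLE_RATE_HZ = 8_000

# Assumption: file extensions are used as a proxy for the listed formats.
ALLOWED_EXTENSIONS = {
    "video": {"mp4"},
    "audio": {"wav", "mp3"},
    "image": {"jpg", "jpeg", "png"},
}


@dataclass
class MediaInfo:
    """Hypothetical description of one input file; fields left as None are not checked."""
    kind: str                      # "video", "audio", or "image"
    extension: str                 # e.g. "mp4" or ".wav"
    duration_s: Optional[float] = None
    frame_count: Optional[int] = None
    sample_rate_hz: Optional[int] = None


def check_limits(media: MediaInfo) -> List[str]:
    """Return a list of human-readable problems; an empty list means no limit was exceeded."""
    allowed = ALLOWED_EXTENSIONS.get(media.kind)
    if allowed is None:
        return [f"unsupported media kind: {media.kind!r}"]

    problems: List[str] = []
    ext = media.extension.lower().lstrip(".")
    if ext not in allowed:
        problems.append(f"{media.kind} format {ext!r} is not in {sorted(allowed)}")

    if media.kind == "video":
        if media.duration_s is not None and media.duration_s > MAX_VIDEO_SECONDS:
            problems.append("video is longer than 2 minutes")
        if media.frame_count is not None and media.frame_count > MAX_VIDEO_FRAMES:
            problems.append("video has more than 256 frames")
    elif media.kind == "audio":
        if media.duration_s is not None and media.duration_s > MAX_AUDIO_SECONDS:
            problems.append("audio is longer than 1 hour")
        if media.sample_rate_hz is not None and media.sample_rate_hz < MIN_AUDIO_SAMPLE_RATE_HZ:
            problems.append("audio sample rate is below 8 kHz")
    return problems
```

Returning a list of problems rather than raising on the first one lets a caller report every violation for a file in one pass.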

🔍 Context

Consolidated multimodal models address growing enterprise demand for agents that can process diverse data streams. The announcement fits a broader trend of large language models moving beyond text to richer sensory inputs, which enables more natural AI interactions. Nemotron 3 Nano Omni competes with other multimodal research efforts, though many of those remain at earlier stages of development or require more complex integration. The release reflects a growing recognition that capable agents need to perceive the world holistically, something that recent advances in model architectures and cloud infrastructure such as Amazon SageMaker JumpStart have made more feasible.

💡 AIUniverse Analysis

The true advance with NVIDIA Nemotron 3 Nano Omni lies in its architectural consolidation. By integrating video, audio, image, and text processing into a single model served at FP8 precision on SageMaker JumpStart, it lowers the barrier to entry for developing sophisticated multimodal AI agents. This reduces the engineering overhead previously required to orchestrate disparate models, enabling faster iteration and deployment of applications ranging from customer service analysis to GUI navigation.

However, the drawback of this release is its complete reliance on cloud-based inference. The deployment offers convenience and scalability, but it centralizes sensitive data processing, raising concerns about long-term costs, data privacy, and vendor lock-in for enterprises. The “Thinking” mode (temperature 0.6, top_p 0.95, and up to 20480 output tokens for complex reasoning) is powerful, yet it underscores the computational demands that necessitate cloud infrastructure, which may limit its use in highly regulated or cost-sensitive environments. The future impact hinges on whether this cloud-centric approach can deliver demonstrable ROI and address emerging privacy regulations more effectively than decentralized alternatives.
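The Thinking settings quoted above, together with the Instruct settings from Key Numbers, could be kept as named presets so each request picks its cost profile explicitly. This is a sketch under stated assumptions: the OpenAI-style `messages` payload and the `chat_template_kwargs` / `enable_thinking` switch are guesses at the request schema, not something the source confirms, and `ModePreset` and `build_payload` are hypothetical names. Only the numeric values come from the article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModePreset:
    """Sampling settings for one mode; values are taken from the article."""
    temperature: float
    max_tokens: int
    thinking: bool
    top_p: Optional[float] = None  # the article gives top_p only for Thinking mode


THINKING = ModePreset(temperature=0.6, top_p=0.95, max_tokens=20480, thinking=True)
INSTRUCT = ModePreset(temperature=0.2, max_tokens=1024, thinking=False)


def build_payload(prompt: str, preset: ModePreset) -> dict:
    """Build a request body for a text prompt using the given preset."""
    payload = {
        # ASSUMPTION: the endpoint accepts an OpenAI-style chat message list.
        "messages": [{"role": "user", "content": prompt}],
        "temperature": preset.temperature,
        "max_tokens": preset.max_tokens,
        # ASSUMPTION: hypothetical switch for the reasoning trace; the real
        # field name depends on the serving container.
        "chat_template_kwargs": {"enable_thinking": preset.thinking},
    }
    if preset.top_p is not None:
        payload["top_p"] = preset.top_p
    return payload
```

For example, `build_payload("Transcribe this call.", INSTRUCT)` caps the response at 1024 tokens, while the `THINKING` preset allows up to 20480, a twentyfold difference in the worst-case output length that feeds directly into the cost concern raised above.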

⚖️ AIUniverse Verdict

✅ Promising. The availability of a unified multimodal LLM with 30 billion parameters on SageMaker JumpStart significantly simplifies agent development by consolidating diverse data processing into a single inference pass.

Developers: Developers can simplify agent architecture by replacing multiple specialized models with a single, unified multimodal model, significantly reducing integration complexity and inference latency.

Enterprise & Mid-Market: Enterprises can unlock new levels of automation and insight by enabling intelligent agents to understand and act upon complex combinations of visual, auditory, and textual information within existing workflows.

General Users: Everyday users will benefit from more intelligent and responsive applications that can understand spoken commands, interpret visual cues, and process documents seamlessly within a single interaction.

⚡ TL;DR

- What happened: NVIDIA Nemotron 3 Nano Omni, a unified multimodal LLM, is now available on Amazon SageMaker JumpStart.

- Why it matters: It simplifies AI agent development by processing video, audio, image, and text in a single pass.

- What to do: Developers can leverage this for complex agentic workflows, remembering to delete SageMaker endpoints when finished to avoid charges.
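The endpoint cleanup mentioned above could be sketched as follows. The function takes a SageMaker client such as the one returned by `boto3.client("sagemaker")` and uses that client's `describe_endpoint`, `describe_endpoint_config`, `delete_endpoint`, `delete_endpoint_config`, and `delete_model` calls. The function name and the endpoint name in the usage comment are placeholders, and the source does not say which resources its own cleanup snippet removes, so treat this as one reasonable approach, not the article's method.

```python
from typing import List, Tuple


def delete_endpoint_resources(sm_client, endpoint_name: str) -> List[Tuple[str, str]]:
    """Delete an endpoint, its endpoint config, and any models that config references.

    Returns the (resource kind, name) pairs that were deleted, in order.
    """
    # Look up the config and model names before deleting anything,
    # because they cannot be resolved once the endpoint is gone.
    endpoint = sm_client.describe_endpoint(EndpointName=endpoint_name)
    config_name = endpoint["EndpointConfigName"]
    config = sm_client.describe_endpoint_config(EndpointConfigName=config_name)
    model_names = [
        variant["ModelName"]
        for variant in config.get("ProductionVariants", [])
        if variant.get("ModelName")  # some variants may not name a model
    ]

    deleted: List[Tuple[str, str]] = []
    sm_client.delete_endpoint(EndpointName=endpoint_name)
    deleted.append(("endpoint", endpoint_name))
    sm_client.delete_endpoint_config(EndpointConfigName=config_name)
    deleted.append(("endpoint-config", config_name))
    for name in model_names:
        sm_client.delete_model(ModelName=name)
        deleted.append(("model", name))
    return deleted


# Usage (needs boto3 and AWS credentials; the endpoint name is a placeholder):
#   import boto3
#   delete_endpoint_resources(boto3.client("sagemaker"), "my-endpoint-name")
```

Passing the client in as an argument keeps the function testable with a stub and avoids hard-coding a region or credentials.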

📖 Key Terms

- Mamba2 Transformer Hybrid Mixture of Experts (MoE): A neural network architecture that combines elements of Mamba, Transformers, and Mixture of Experts to process complex data efficiently.

- Multimodal large language model: An AI model capable of understanding and processing information from multiple types of data, such as text, images, audio, and video, simultaneously.

- FP8 precision: A data format using 8-bit floating-point numbers, which trades some numerical precision for lower memory use and faster AI inference and training on compatible hardware.

- Open model agreement: A specific license governing the use, modification, and distribution of an AI model, often allowing for broader commercial application.
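To make the FP8 and MoE terms concrete, the arithmetic below estimates weight storage from the parameter counts in Key Numbers. It is a back-of-the-envelope sketch: it assumes every weight is stored at the stated precision (real checkpoints often keep some layers at higher precision) and it ignores activations, cache or state memory, and runtime overhead. `weight_gb` is a hypothetical helper name.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp8": 1, "bf16": 2, "fp32": 4}

TOTAL_PARAMS = 30e9   # total parameters, from Key Numbers
ACTIVE_PARAMS = 3e9   # parameters active per token, from Key Numbers


def weight_gb(num_params: float, precision: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

print(f"FP8 weights:  ~{weight_gb(TOTAL_PARAMS, 'fp8'):.0f} GB")
print(f"BF16 weights: ~{weight_gb(TOTAL_PARAMS, 'bf16'):.0f} GB")
print(f"Active share of parameters per token: {active_fraction:.0%}")
```

Under these assumptions, FP8 halves weight storage relative to a 16-bit format (about 30 GB versus about 60 GB), and the MoE design activates roughly 10% of the parameters for each token, although all 30 billion still have to be held in memory.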

Analysis based on reporting by AWS ML Blog.